24 Jan 2017 LEARNING PHYSICS CONTROL SYSTEM / State space representation.

https://en.wikipedia.org/wiki/State-space_representation

In control engineering, a state-space representation is a mathematical model of a physical system as a set of input, output and state variables related by first-order differential equations. "State space" refers to the space in which the variables on the axes are the state variables. The state of the system can be represented as a vector within that space.

To abstract from the number of inputs, outputs and states, these variables are expressed as vectors. Additionally, if the dynamical system is linear, time-invariant, and finite-dimensional, then the differential and algebraic equations may be written in matrix form.[1][2]

The state-space method is characterized by significant algebraization of general system theory, which makes it possible to use Kronecker vector-matrix structures. These structures can be applied efficiently to the study of systems with or without modulation.[3]

The state-space representation (also known as the "time-domain approach") provides a convenient and compact way to model and analyze systems with multiple inputs and outputs. With $p$ inputs and $q$ outputs, we would otherwise have to write down $q \times p$ Laplace transforms to encode all the information about a system. Unlike the frequency domain approach, the use of the state-space representation is not limited to systems with linear components and zero initial conditions.

State variables

The internal state variables are the smallest possible subset of system variables that can represent the entire state of the system at any given time.[4] The minimum number of state variables required to represent a given system, $n$, is usually equal to the order of the system's defining differential equation. If the system is represented in transfer function form, the minimum number of state variables is equal to the order of the transfer function's denominator after it has been reduced to a proper fraction. It is important to understand that converting a state-space realization to a transfer function form may lose some internal information about the system, and may provide a description of a system which is stable, when the state-space realization is unstable at certain points. In electric circuits, the number of state variables is often, though not always, the same as the number of energy storage elements in the circuit such as capacitors and inductors.

The state variables defined must be linearly independent, i.e., no state variable can be written as a linear combination of the other state variables; otherwise the system cannot be solved.

Linear systems

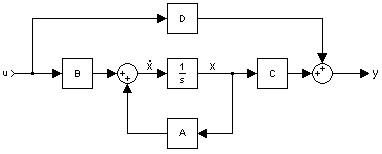

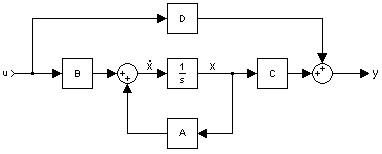

Block diagram representation of the linear state-space equations

The most general state-space representation of a linear system with $p$ inputs, $q$ outputs and $n$ state variables is written in the following form:[5]

$$\dot{\mathbf{x}}(t) = A(t)\mathbf{x}(t) + B(t)\mathbf{u}(t)$$

$$\mathbf{y}(t) = C(t)\mathbf{x}(t) + D(t)\mathbf{u}(t)$$

where:

- $\mathbf{x}(\cdot)$ is called the "state vector", $\mathbf{x}(t) \in \mathbb{R}^{n}$;
- $\mathbf{y}(\cdot)$ is called the "output vector", $\mathbf{y}(t) \in \mathbb{R}^{q}$;
- $\mathbf{u}(\cdot)$ is called the "input (or control) vector", $\mathbf{u}(t) \in \mathbb{R}^{p}$;
- $A(\cdot)$ is the "state (or system) matrix", $\operatorname{dim}[A(\cdot)] = n \times n$;
- $B(\cdot)$ is the "input matrix", $\operatorname{dim}[B(\cdot)] = n \times p$;
- $C(\cdot)$ is the "output matrix", $\operatorname{dim}[C(\cdot)] = q \times n$;
- $D(\cdot)$ is the "feedthrough (or feedforward) matrix" (in cases where the system model does not have a direct feedthrough, $D(\cdot)$ is the zero matrix), $\operatorname{dim}[D(\cdot)] = q \times p$;
- $\dot{\mathbf{x}}(t) := \frac{d}{dt}\mathbf{x}(t)$.

In this general formulation, all matrices are allowed to be time-variant (i.e. their elements can depend on time); however, in the common LTI case, matrices will be time invariant. The time variable $t$ can be continuous (e.g. $t \in \mathbb{R}$) or discrete (e.g. $t \in \mathbb{Z}$). In the latter case, the time variable $k$ is usually used instead of $t$. Hybrid systems allow for time domains that have both continuous and discrete parts. Depending on the assumptions taken, the state-space model representation can assume the following forms:

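| System type | State-space model |
| --- | --- |
| Continuous time-invariant | $\dot{\mathbf{x}}(t) = A\mathbf{x}(t) + B\mathbf{u}(t)$, $\mathbf{y}(t) = C\mathbf{x}(t) + D\mathbf{u}(t)$ |
| Continuous time-variant | $\dot{\mathbf{x}}(t) = A(t)\mathbf{x}(t) + B(t)\mathbf{u}(t)$, $\mathbf{y}(t) = C(t)\mathbf{x}(t) + D(t)\mathbf{u}(t)$ |
| Explicit discrete time-invariant | $\mathbf{x}(k+1) = A\mathbf{x}(k) + B\mathbf{u}(k)$, $\mathbf{y}(k) = C\mathbf{x}(k) + D\mathbf{u}(k)$ |
| Explicit discrete time-variant | $\mathbf{x}(k+1) = A(k)\mathbf{x}(k) + B(k)\mathbf{u}(k)$, $\mathbf{y}(k) = C(k)\mathbf{x}(k) + D(k)\mathbf{u}(k)$ |
| Laplace domain of continuous time-invariant | $s\mathbf{X}(s) - \mathbf{x}(0) = A\mathbf{X}(s) + B\mathbf{U}(s)$, $\mathbf{Y}(s) = C\mathbf{X}(s) + D\mathbf{U}(s)$ |
| Z-domain of discrete time-invariant | $z\mathbf{X}(z) - z\mathbf{x}(0) = A\mathbf{X}(z) + B\mathbf{U}(z)$, $\mathbf{Y}(z) = C\mathbf{X}(z) + D\mathbf{U}(z)$ |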
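The explicit discrete time-invariant form, x(k+1) = A x(k) + B u(k) with y(k) = C x(k) + D u(k), can be stepped directly in a loop. The following is a minimal sketch using NumPy; the function name `simulate_discrete` and the matrix values are assumptions chosen only for illustration.

```python
import numpy as np

def simulate_discrete(A, B, C, D, x0, inputs):
    """Step x(k+1) = A x(k) + B u(k), y(k) = C x(k) + D u(k) over a sequence of inputs."""
    x = np.asarray(x0, dtype=float)
    outputs = []
    for u in inputs:
        u = np.atleast_1d(np.asarray(u, dtype=float))
        outputs.append(C @ x + D @ u)   # output at step k
        x = A @ x + B @ u               # state at step k + 1
    return np.array(outputs), x

# Hypothetical 2-state, 1-input, 1-output system (illustrative values only)
A = np.array([[1.0, 0.1], [0.0, 1.0]])
B = np.array([[0.0], [0.1]])
C = np.array([[1.0, 0.0]])
D = np.array([[0.0]])

ys, x_final = simulate_discrete(A, B, C, D, [0.0, 0.0], [1.0, 1.0, 1.0])
print(ys.ravel(), x_final)
```

Each row of `ys` is one output vector y(k), so the result has shape (number of steps, q).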

Example: continuous-time LTI case

Stability and natural response characteristics of a continuous-time LTI system (i.e., linear with matrices that are constant with respect to time) can be studied from the eigenvalues of the matrix $A$. The stability of a time-invariant state-space model can be determined by looking at the system's transfer function in factored form. It will then look something like this:

$$\mathbf{G}(s) = k\frac{(s - z_{1})(s - z_{2})(s - z_{3})}{(s - p_{1})(s - p_{2})(s - p_{3})(s - p_{4})}$$

The denominator of the transfer function is equal to the characteristic polynomial found by taking the determinant of $sI - A$,

$$\lambda(s) = \det(sI - A).$$

The roots of this polynomial (the eigenvalues) are the system transfer function's poles (i.e., the singularities where the transfer function's magnitude is unbounded). These poles can be used to analyze whether the system is asymptotically stable or marginally stable. An alternative approach to determining stability, which does not involve calculating eigenvalues, is to analyze the system's Lyapunov stability.

The zeros found in the numerator of $\mathbf{G}(s)$ can similarly be used to determine whether the system is minimum phase.

The system may still be input–output stable (see BIBO stable) even though it is not internally stable. This may be the case if unstable poles are canceled out by zeros (i.e., if those singularities in the transfer function are removable).
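As a rough numerical illustration of the eigenvalue test, the sketch below checks whether every eigenvalue of A has a negative real part, which is the condition for asymptotic stability of a continuous-time LTI system. The helper name and the two example matrices are assumptions made for this sketch.

```python
import numpy as np

def is_asymptotically_stable(A):
    """Continuous-time LTI check: all eigenvalues of A lie strictly in the left half-plane."""
    return bool(np.all(np.linalg.eigvals(A).real < 0))

# Illustrative matrices (not taken from the text above)
A_stable = np.array([[0.0, 1.0], [-2.0, -3.0]])   # det(sI - A) = s^2 + 3s + 2
A_unstable = np.array([[0.0, 1.0], [2.0, 1.0]])   # det(sI - A) = s^2 - s - 2

# np.poly applied to a square matrix returns its characteristic polynomial coefficients
char_poly = np.poly(A_stable)

print(is_asymptotically_stable(A_stable), is_asymptotically_stable(A_unstable))
print(char_poly)
```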

Controllability

The state controllability condition implies that it is possible, by admissible inputs, to steer the states from any initial value to any final value within some finite time window. A continuous time-invariant linear state-space model is controllable if and only if

$$\operatorname{rank}\begin{bmatrix} B & AB & A^{2}B & \cdots & A^{n-1}B \end{bmatrix} = n,$$

where rank is the number of linearly independent rows in a matrix, and where $n$ is the number of state variables.
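The rank test on the controllability matrix [B, AB, ..., A^(n-1)B] can be sketched numerically as follows. The function names and the example systems are assumptions for illustration; `np.linalg.matrix_rank` uses a numerical tolerance, so results for badly scaled systems should be treated with care.

```python
import numpy as np

def controllability_matrix(A, B):
    """Stack [B, AB, A^2 B, ..., A^(n-1) B] column-wise."""
    n = A.shape[0]
    blocks = [B]
    for _ in range(n - 1):
        blocks.append(A @ blocks[-1])
    return np.hstack(blocks)

def is_controllable(A, B):
    return bool(np.linalg.matrix_rank(controllability_matrix(A, B)) == A.shape[0])

# Double integrator driven through its second state: controllable
A1 = np.array([[0.0, 1.0], [0.0, 0.0]])
B1 = np.array([[0.0], [1.0]])

# Two decoupled modes with the input reaching only the first: not controllable
A2 = np.diag([1.0, 2.0])
B2 = np.array([[1.0], [0.0]])

print(is_controllable(A1, B1), is_controllable(A2, B2))
```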

Observability

Observability is a measure of how well the internal states of a system can be inferred from knowledge of its external outputs. The observability and controllability of a system are mathematical duals (i.e., as controllability provides that an input is available that brings any initial state to any desired final state, observability provides that knowing an output trajectory provides enough information to predict the initial state of the system).

A continuous time-invariant linear state-space model is observable if and only if

$$\operatorname{rank}\begin{bmatrix} C \\ CA \\ \vdots \\ CA^{n-1} \end{bmatrix} = n.$$
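A matching sketch for observability stacks C, CA, ..., CA^(n-1) row-wise and checks that the rank equals the number of states n. The names and example matrices below are assumptions for illustration only.

```python
import numpy as np

def observability_matrix(A, C):
    """Stack [C; CA; ...; C A^(n-1)] row-wise."""
    n = A.shape[0]
    blocks = [C]
    for _ in range(n - 1):
        blocks.append(blocks[-1] @ A)
    return np.vstack(blocks)

def is_observable(A, C):
    return bool(np.linalg.matrix_rank(observability_matrix(A, C)) == A.shape[0])

# Double integrator: measuring position reveals velocity, measuring velocity alone does not reveal position
A = np.array([[0.0, 1.0], [0.0, 0.0]])
C_position = np.array([[1.0, 0.0]])
C_velocity = np.array([[0.0, 1.0]])

print(is_observable(A, C_position), is_observable(A, C_velocity))
```

The duality mentioned above shows up in the code: the observability matrix of (A, C) is the transpose of the controllability matrix of (Aᵀ, Cᵀ).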

Transfer function

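The "transfer function" of a continuous time-invariant linear state-space model can be derived in the following way:

First, taking the Laplace transform of

$$\dot{\mathbf{x}}(t) = A\mathbf{x}(t) + B\mathbf{u}(t)$$

yields

$$s\mathbf{X}(s) - \mathbf{x}(0) = A\mathbf{X}(s) + B\mathbf{U}(s).$$

Next, we simplify for $\mathbf{X}(s)$, giving

$$(sI - A)\mathbf{X}(s) = \mathbf{x}(0) + B\mathbf{U}(s)$$

and thus

$$\mathbf{X}(s) = (sI - A)^{-1}\mathbf{x}(0) + (sI - A)^{-1}B\mathbf{U}(s).$$

Substituting for $\mathbf{X}(s)$ in the output equation

$$\mathbf{Y}(s) = C\mathbf{X}(s) + D\mathbf{U}(s),$$

giving

$$\mathbf{Y}(s) = C\left((sI - A)^{-1}\mathbf{x}(0) + (sI - A)^{-1}B\mathbf{U}(s)\right) + D\mathbf{U}(s).$$

The transfer function $\mathbf{G}(s)$ is defined as the ratio of the output to the input of a system considering its initial conditions to be zero ($\mathbf{x}(0) = \mathbf{0}$). However, the ratio of a vector to a vector does not exist, so we consider the following condition satisfied by the transfer function

$$\mathbf{G}(s)\mathbf{U}(s) = \mathbf{Y}(s);$$

comparison with the equation for $\mathbf{Y}(s)$ above gives

$$\mathbf{G}(s) = C(sI - A)^{-1}B + D.$$

Clearly $\mathbf{G}(s)$ must have $q$ by $p$ dimensionality, and thus has a total of $qp$ elements. So for every input there are $q$ transfer functions, one for each output. This is why the state-space representation can easily be the preferred choice for multiple-input, multiple-output (MIMO) systems. The Rosenbrock system matrix provides a bridge between the state-space representation and its transfer function.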
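The relation G(s) = C (sI - A)^(-1) B + D can be evaluated numerically at any complex frequency s that is not an eigenvalue of A. The sketch below does this with a linear solve rather than an explicit inverse; the function name and the example system are assumptions for illustration.

```python
import numpy as np

def transfer_function_at(A, B, C, D, s):
    """Evaluate G(s) = C (sI - A)^(-1) B + D at one value of s; the result has shape (q, p)."""
    n = A.shape[0]
    return C @ np.linalg.solve(s * np.eye(n) - A, B) + D

# Illustrative SISO system whose transfer function is 1 / (s^2 + 3s + 2)
A = np.array([[0.0, 1.0], [-2.0, -3.0]])
B = np.array([[0.0], [1.0]])
C = np.array([[1.0, 0.0]])
D = np.array([[0.0]])

G_dc = transfer_function_at(A, B, C, D, 0.0)    # steady-state gain
G_jw = transfer_function_at(A, B, C, D, 1.0j)   # frequency response at 1 rad/s
print(G_dc, G_jw)
```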

Canonical realizations

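Any given transfer function which is strictly proper can easily be transferred into state-space by the following approach (this example is for a 4-dimensional, single-input, single-output system):

Given a transfer function, expand it to reveal all coefficients in both the numerator and denominator. This should result in the following form:

$$\mathbf{G}(s) = \frac{n_{1}s^{3} + n_{2}s^{2} + n_{3}s + n_{4}}{s^{4} + d_{1}s^{3} + d_{2}s^{2} + d_{3}s + d_{4}}.$$

The coefficients can now be inserted directly into the state-space model by the following approach:

$$\dot{\mathbf{x}}(t) = \begin{bmatrix} -d_{1} & -d_{2} & -d_{3} & -d_{4} \\ 1 & 0 & 0 & 0 \\ 0 & 1 & 0 & 0 \\ 0 & 0 & 1 & 0 \end{bmatrix}\mathbf{x}(t) + \begin{bmatrix} 1 \\ 0 \\ 0 \\ 0 \end{bmatrix}\mathbf{u}(t)$$

$$\mathbf{y}(t) = \begin{bmatrix} n_{1} & n_{2} & n_{3} & n_{4} \end{bmatrix}\mathbf{x}(t).$$

This state-space realization is called controllable canonical form because the resulting model is guaranteed to be controllable (i.e., because the control enters a chain of integrators, it has the ability to move every state).

The transfer function coefficients can also be used to construct another type of canonical form

$$\dot{\mathbf{x}}(t) = \begin{bmatrix} -d_{1} & 1 & 0 & 0 \\ -d_{2} & 0 & 1 & 0 \\ -d_{3} & 0 & 0 & 1 \\ -d_{4} & 0 & 0 & 0 \end{bmatrix}\mathbf{x}(t) + \begin{bmatrix} n_{1} \\ n_{2} \\ n_{3} \\ n_{4} \end{bmatrix}\mathbf{u}(t)$$

$$\mathbf{y}(t) = \begin{bmatrix} 1 & 0 & 0 & 0 \end{bmatrix}\mathbf{x}(t).$$

This state-space realization is called observable canonical form because the resulting model is guaranteed to be observable (i.e., because the output exits from a chain of integrators, every state has an effect on the output).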
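The construction can be sketched in code for any order. This sketch assumes the transfer function is written as G(s) = (n1 s^(n-1) + ... + nn) / (s^n + d1 s^(n-1) + ... + dn) and uses the companion layout with the denominator coefficients in the first row, which is one of several equivalent conventions. The function names and sample coefficients are hypothetical.

```python
import numpy as np

def controllable_canonical(num, den):
    """num = [n1..nn], den = [d1..dn] (monic denominator, leading 1 omitted)."""
    n = len(den)
    A = np.zeros((n, n))
    A[0, :] = -np.asarray(den, dtype=float)   # first row carries -d1 ... -dn
    A[1:, :-1] = np.eye(n - 1)                # chain of integrators below it
    B = np.zeros((n, 1))
    B[0, 0] = 1.0
    C = np.asarray(num, dtype=float).reshape(1, n)
    return A, B, C

def observable_canonical(num, den):
    """Dual of the controllable form: transpose A and swap the roles of B and C."""
    A, B, C = controllable_canonical(num, den)
    return A.T, C.T, B.T

# Hypothetical coefficients, used only to check both forms against the polynomial ratio
num, den = [1.0, 2.0, 3.0, 4.0], [5.0, 6.0, 7.0, 8.0]
s0 = 1.5
G_expected = np.polyval(num, s0) / np.polyval([1.0] + den, s0)

Ac, Bc, Cc = controllable_canonical(num, den)
Ao, Bo, Co = observable_canonical(num, den)
G_ctrl = (Cc @ np.linalg.solve(s0 * np.eye(4) - Ac, Bc))[0, 0]
G_obsv = (Co @ np.linalg.solve(s0 * np.eye(4) - Ao, Bo))[0, 0]
print(G_expected, G_ctrl, G_obsv)
```

Both realizations reproduce the same transfer function; they differ only in the choice of state coordinates.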

Proper transfer functions

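Transfer functions which are only proper (and not strictly proper) can also be realised quite easily. The trick here is to separate the transfer function into two parts: a strictly proper part and a constant.

$$\mathbf{G}(s) = \mathbf{G}_{\mathrm{SP}}(s) + \mathbf{G}(\infty).$$

The strictly proper transfer function can then be transformed into a canonical state-space realization using techniques shown above. The state-space realization of the constant is trivially $\mathbf{y}(t) = \mathbf{G}(\infty)\mathbf{u}(t)$. Together we then get a state-space realization with matrices A, B and C determined by the strictly proper part, and matrix D determined by the constant.

Here is an example to clear things up a bit:

$$\mathbf{G}(s) = \frac{s^{2} + 3s + 3}{s^{2} + 2s + 1} = \frac{s + 2}{s^{2} + 2s + 1} + 1$$

which yields the following controllable realization

$$\dot{\mathbf{x}}(t) = \begin{bmatrix} -2 & -1 \\ 1 & 0 \end{bmatrix}\mathbf{x}(t) + \begin{bmatrix} 1 \\ 0 \end{bmatrix}\mathbf{u}(t)$$

$$\mathbf{y}(t) = \begin{bmatrix} 1 & 2 \end{bmatrix}\mathbf{x}(t) + \begin{bmatrix} 1 \end{bmatrix}\mathbf{u}(t).$$

Notice how the output also depends directly on the input. This is due to the constant $\mathbf{G}(\infty)$ in the transfer function.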
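The split into a constant plus a strictly proper part is a polynomial division, which can be sketched with `numpy.polydiv`. The sample transfer function (s² + 3s + 3)/(s² + 2s + 1) and the first-row companion layout used for the realization are assumptions of this sketch.

```python
import numpy as np

num = [1.0, 3.0, 3.0]   # s^2 + 3s + 3
den = [1.0, 2.0, 1.0]   # s^2 + 2s + 1 (monic)

# Quotient is the constant part, remainder is the numerator of the strictly proper part
quotient, remainder = np.polydiv(num, den)
D = np.array([[quotient[-1]]])

n = len(den) - 1
rem = np.trim_zeros(np.atleast_1d(remainder), "f")
c_row = np.zeros(n)
c_row[n - len(rem):] = rem                     # right-align remainder coefficients

# Controllable realization of the strictly proper part
A = np.zeros((n, n))
A[0, :] = -np.array(den[1:])
A[1:, :-1] = np.eye(n - 1)
B = np.zeros((n, 1))
B[0, 0] = 1.0
C = c_row.reshape(1, n)

# Check the realization against the original rational function at a test point
s0 = 0.7
G_direct = np.polyval(num, s0) / np.polyval(den, s0)
G_ss = (C @ np.linalg.solve(s0 * np.eye(n) - A, B) + D)[0, 0]
print(A, C, D, G_direct, G_ss)
```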

Feedback

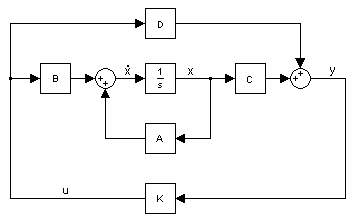

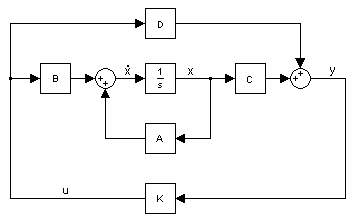

Typical state-space model with feedback

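A common method for feedback is to multiply the output by a matrix $K$ and set this as the input to the system: $\mathbf{u}(t) = K\mathbf{y}(t)$. Since the values of $K$ are unrestricted, they can easily be negated for negative feedback. The presence of a negative sign (the common notation) is merely notational, and its absence has no impact on the end results.

$$\dot{\mathbf{x}}(t) = A\mathbf{x}(t) + B\mathbf{u}(t)$$

$$\mathbf{y}(t) = C\mathbf{x}(t) + D\mathbf{u}(t)$$

becomes

$$\dot{\mathbf{x}}(t) = A\mathbf{x}(t) + BK\mathbf{y}(t)$$

$$\mathbf{y}(t) = C\mathbf{x}(t) + DK\mathbf{y}(t);$$

solving the output equation for $\mathbf{y}(t)$ and substituting in the state equation results in

$$\dot{\mathbf{x}}(t) = \left(A + BK(I - DK)^{-1}C\right)\mathbf{x}(t)$$

$$\mathbf{y}(t) = (I - DK)^{-1}C\mathbf{x}(t).$$

The advantage of this is that the eigenvalues of $A$ can be controlled by setting $K$ appropriately through eigendecomposition of $\left(A + BK(I - DK)^{-1}C\right)$. This assumes that the closed-loop system is controllable or that the unstable eigenvalues of $A$ can be made stable through appropriate choice of $K$.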

Example

For a strictly proper system $D$ equals zero. Another fairly common situation is when all states are outputs, i.e. $\mathbf{y} = \mathbf{x}$, which yields $C = I$, the identity matrix. This would then result in the simpler equations

$$\dot{\mathbf{x}}(t) = (A + BK)\mathbf{x}(t)$$

$$\mathbf{y}(t) = \mathbf{x}(t).$$

This reduces the necessary eigendecomposition to just $A + BK$.
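The effect of the feedback gain on the eigenvalues can be checked numerically. The sketch below forms the closed-loop matrix A + B K (I - D K)^(-1) C for the feedback law u = K y and applies it to the full-state case (C = I, D = 0), where it reduces to A + B K. The plant and gain values are assumptions chosen so that an unstable open-loop system becomes stable.

```python
import numpy as np

def closed_loop_matrix(A, B, C, D, K):
    """State matrix under u = K y: A + B K (I - D K)^(-1) C."""
    q = C.shape[0]
    return A + B @ K @ np.linalg.solve(np.eye(q) - D @ K, C)

# Illustrative unstable plant (open-loop eigenvalues 2 and -1) with all states measured
A = np.array([[0.0, 1.0], [2.0, 1.0]])
B = np.array([[0.0], [1.0]])
C = np.eye(2)
D = np.zeros((2, 1))
K = np.array([[-4.0, -4.0]])   # hypothetical gain; negative entries give negative feedback

A_cl = closed_loop_matrix(A, B, C, D, K)
open_loop_eigs = np.sort(np.linalg.eigvals(A).real)
closed_loop_eigs = np.sort(np.linalg.eigvals(A_cl).real)
print(open_loop_eigs, closed_loop_eigs)
```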

Feedback with setpoint (reference) input

Output feedback with set point

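In addition to feedback, an input, $\mathbf{r}(t)$, can be added such that $\mathbf{u}(t) = -K\mathbf{y}(t) + \mathbf{r}(t)$.

$$\dot{\mathbf{x}}(t) = A\mathbf{x}(t) + B\mathbf{u}(t)$$

$$\mathbf{y}(t) = C\mathbf{x}(t) + D\mathbf{u}(t)$$

becomes

$$\dot{\mathbf{x}}(t) = A\mathbf{x}(t) - BK\mathbf{y}(t) + B\mathbf{r}(t)$$

$$\mathbf{y}(t) = C\mathbf{x}(t) - DK\mathbf{y}(t) + D\mathbf{r}(t);$$

solving the output equation for $\mathbf{y}(t)$ and substituting in the state equation results in

$$\dot{\mathbf{x}}(t) = \left(A - BK(I + DK)^{-1}C\right)\mathbf{x}(t) + B\left(I - K(I + DK)^{-1}D\right)\mathbf{r}(t)$$

$$\mathbf{y}(t) = (I + DK)^{-1}C\mathbf{x}(t) + (I + DK)^{-1}D\mathbf{r}(t).$$

One fairly common simplification to this system is removing $D$, which reduces the equations to

$$\dot{\mathbf{x}}(t) = (A - BKC)\mathbf{x}(t) + B\mathbf{r}(t)$$

$$\mathbf{y}(t) = C\mathbf{x}(t).$$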
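The closed-loop matrices for the law u = -K y + r follow from solving the output equation for y, and can be sketched as below. The function name and the scalar test system are assumptions; the scalar case is small enough to verify by hand (y = (x + r)/2, so dx/dt = -1.5 x + 0.5 r).

```python
import numpy as np

def setpoint_closed_loop(A, B, C, D, K):
    """Closed-loop (A, B, C, D) under u = -K y + r, with r as the new input."""
    q, p = D.shape
    M = np.linalg.inv(np.eye(q) + D @ K)        # (I + D K)^(-1)
    A_cl = A - B @ K @ M @ C
    B_cl = B @ (np.eye(p) - K @ M @ D)
    C_cl = M @ C
    D_cl = M @ D
    return A_cl, B_cl, C_cl, D_cl

# Scalar system with direct feedthrough (illustrative values)
A = np.array([[-1.0]])
B = np.array([[1.0]])
C = np.array([[1.0]])
D = np.array([[1.0]])
K = np.array([[1.0]])
A_cl, B_cl, C_cl, D_cl = setpoint_closed_loop(A, B, C, D, K)

# With D = 0 the result reduces to (A - B K C, B, C, 0)
A0, B0, C0, D0 = setpoint_closed_loop(A, B, C, np.zeros((1, 1)), K)
print(A_cl, B_cl, C_cl, D_cl)
```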

Moving object example

A classical linear system is that of one-dimensional movement of an object. Newton's laws of motion for an object moving horizontally on a plane and attached to a wall with a spring give

$$m\ddot{y}(t) = u(t) - b\dot{y}(t) - ky(t)$$

where

- $y(t)$ is position; $\dot{y}(t)$ is velocity; $\ddot{y}(t)$ is acceleration
- $u(t)$ is an applied force
- $b$ is the viscous friction coefficient
- $k$ is the spring constant
- $m$ is the mass of the object

The state equation would then become

![\left[{\begin{matrix}\mathbf {{\dot {x}}_{1}} (t)\\\mathbf {{\dot {x}}_{2}} (t)\end{matrix}}\right]=\left[{\begin{matrix}0&1\\-{\frac {k}{m}}&-{\frac {b}{m}}\end{matrix}}\right]\left[{\begin{matrix}\mathbf {x_{1}} (t)\\\mathbf {x_{2}} (t)\end{matrix}}\right]+\left[{\begin{matrix}0\\{\frac {1}{m}}\end{matrix}}\right]\mathbf {u} (t)](https://wikimedia.org/api/rest_v1/media/math/render/svg/e861dcd554e6e9131d93d443184570672febd3b8)

![\mathbf {y} (t)=\left[{\begin{matrix}1&0\end{matrix}}\right]\left[{\begin{matrix}\mathbf {x_{1}} (t)\\\mathbf {x_{2}} (t)\end{matrix}}\right]](https://wikimedia.org/api/rest_v1/media/math/render/svg/089622c024d88e55fb7da811b54574235a1af4a1)

where

- $x_{1}(t)$ represents the position of the object
- $x_{2}(t) = \dot{x}_{1}(t)$ is the velocity of the object
- $\dot{x}_{2}(t) = \ddot{x}_{1}(t)$ is the acceleration of the object
- the output $\mathbf{y}(t)$ is the position of the object

The controllability test is then

![\left[{\begin{matrix}B&AB\end{matrix}}\right]=\left[{\begin{matrix}\left[{\begin{matrix}0\\{\frac {1}{m}}\end{matrix}}\right]&\left[{\begin{matrix}0&1\\-{\frac {k}{m}}&-{\frac {b}{m}}\end{matrix}}\right]\left[{\begin{matrix}0\\{\frac {1}{m}}\end{matrix}}\right]\end{matrix}}\right]=\left[{\begin{matrix}0&{\frac {1}{m}}\\{\frac {1}{m}}&-{\frac {b}{m^{2}}}\end{matrix}}\right]](https://wikimedia.org/api/rest_v1/media/math/render/svg/ffd25c894f682f51d16451d0576a27a6db76813d)

which has full rank for all $b$ and $m$.

The observability test is then

![\left[{\begin{matrix}C\\CA\end{matrix}}\right]=\left[{\begin{matrix}\left[{\begin{matrix}1&0\end{matrix}}\right]\\\left[{\begin{matrix}1&0\end{matrix}}\right]\left[{\begin{matrix}0&1\\-{\frac {k}{m}}&-{\frac {b}{m}}\end{matrix}}\right]\end{matrix}}\right]=\left[{\begin{matrix}1&0\\0&1\end{matrix}}\right]](https://wikimedia.org/api/rest_v1/media/math/render/svg/60755dc5ccc362908dc8405dfc3f54922b5ae0be)

which also has full rank. Therefore, this system is both controllable and observable.
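The two rank tests for the spring-mass-damper model can be reproduced numerically. The parameter values below (m = 2, b = 0.5, k = 3) are assumptions picked for illustration; the matrices follow the state equation given above.

```python
import numpy as np

m, b, k = 2.0, 0.5, 3.0   # hypothetical mass, friction coefficient, spring constant

A = np.array([[0.0, 1.0], [-k / m, -b / m]])
B = np.array([[0.0], [1.0 / m]])
C = np.array([[1.0, 0.0]])

ctrb = np.hstack([B, A @ B])    # [B, AB]
obsv = np.vstack([C, C @ A])    # [C; CA]

ctrb_rank = np.linalg.matrix_rank(ctrb)
obsv_rank = np.linalg.matrix_rank(obsv)
ctrb_det = np.linalg.det(ctrb)  # equals -1/m^2, nonzero for any finite mass
print(ctrb_rank, obsv_rank, ctrb_det)
```

The determinant of the controllability matrix does not depend on b or k, which is why the test passes for every choice of those parameters.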

Nonlinear systems

The more general form of a state-space model can be written as two functions.

$$\dot{\mathbf{x}}(t) = \mathbf{f}(t, \mathbf{x}(t), \mathbf{u}(t))$$

$$\mathbf{y}(t) = \mathbf{h}(t, \mathbf{x}(t), \mathbf{u}(t))$$

The first is the state equation and the latter is the output equation. If the function $\mathbf{f}(\cdot, \cdot, \cdot)$ is a linear combination of states and inputs then the equations can be written in matrix notation like above. The $\mathbf{u}(t)$ argument to the functions can be dropped if the system is unforced (i.e., it has no inputs).

Pendulum example

A classic nonlinear system is a simple unforced pendulum

$$m\ell^{2}\ddot{\theta}(t) = -m\ell g\sin\theta(t) - k\ell\dot{\theta}(t)$$

where

- $\theta(t)$ is the angle of the pendulum with respect to the direction of gravity
- $m$ is the mass of the pendulum (pendulum rod's mass is assumed to be zero)
- $g$ is the gravitational acceleration
- $k$ is the coefficient of friction at the pivot point
- $\ell$ is the radius of the pendulum (to the center of gravity of the mass $m$)

The state equations are then

$$\dot{x}_{1}(t) = x_{2}(t)$$

$$\dot{x}_{2}(t) = -\frac{g}{\ell}\sin x_{1}(t) - \frac{k}{m\ell}x_{2}(t)$$

where

- $x_{1}(t) = \theta(t)$ is the angle of the pendulum
- $x_{2}(t) = \dot{x}_{1}(t)$ is the rotational velocity of the pendulum
- $\dot{x}_{2}(t) = \ddot{x}_{1}(t)$ is the rotational acceleration of the pendulum

Instead, the state equation can be written in the general form

$$\dot{\mathbf{x}}(t) = \begin{pmatrix} \dot{x}_{1}(t) \\ \dot{x}_{2}(t) \end{pmatrix} = \mathbf{f}(t, \mathbf{x}(t)) = \begin{pmatrix} x_{2}(t) \\ -\frac{g}{\ell}\sin x_{1}(t) - \frac{k}{m\ell}x_{2}(t) \end{pmatrix}.$$

The equilibrium/stationary points of a system are when $\dot{\mathbf{x}} = \mathbf{0}$, and so the equilibrium points of a pendulum are those that satisfy

$$\begin{pmatrix} x_{1} \\ x_{2} \end{pmatrix} = \begin{pmatrix} n\pi \\ 0 \end{pmatrix}$$

for integers $n$.
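The pendulum state function and its equilibria can be checked with a few lines of code. This sketch assumes the state x = (angle, angular velocity) and the dynamics dx1/dt = x2, dx2/dt = -(g/l) sin(x1) - (k/(m l)) x2; the parameter values are placeholders for illustration.

```python
import numpy as np

g, length, mass, friction = 9.81, 1.0, 0.5, 0.1   # hypothetical parameter values

def pendulum_f(x):
    """State derivative of the unforced pendulum; x = [angle, angular velocity]."""
    x1, x2 = x
    return np.array([
        x2,
        -(g / length) * np.sin(x1) - (friction / (mass * length)) * x2,
    ])

# The state derivative vanishes at (n * pi, 0) for every integer n
equilibria = [np.array([n * np.pi, 0.0]) for n in range(-2, 3)]
residuals = [np.linalg.norm(pendulum_f(x)) for x in equilibria]

# Away from those points the pendulum accelerates back toward the hanging position
derivative_at_quarter_turn = pendulum_f(np.array([np.pi / 2, 0.0]))
print(residuals, derivative_at_quarter_turn)
```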


References

[1] Katalin M. Hangos; R. Lakner & M. Gerzson (2001). Intelligent Control Systems: An Introduction with Examples. Springer. p. 254. ISBN 978-1-4020-0134-5.

[2] Katalin M. Hangos; József Bokor & Gábor Szederkényi (2004). Analysis and Control of Nonlinear Process Systems. Springer. p. 25. ISBN 978-1-85233-600-4.

[3] Vasilyev A.S.; Ushakov A.V. (2015). "Modeling of dynamic systems with modulation by means of Kronecker vector-matrix representation". Scientific and Technical Journal of Information Technologies, Mechanics and Optics. 15 (5): 839–848.

[4] Nise, Norman S. (2010). Control Systems Engineering (6th ed.). John Wiley & Sons, Inc. ISBN 978-0-470-54756-4.

[5] Brogan, William L. (1974). Modern Control Theory (1st ed.). Quantum Publishers, Inc. p. 172.

Further reading

- Antsaklis, P. J.; Michel, A. N. (2007). A Linear Systems Primer. Birkhauser. ISBN 978-0-8176-4460-4.

- Chen, Chi-Tsong (1999). Linear System Theory and Design (3rd ed.). Oxford University Press. ISBN 0-19-511777-8.

- Khalil, Hassan K. (2001). Nonlinear Systems (3rd ed.). Prentice Hall. ISBN 0-13-067389-7.

- Hinrichsen, Diederich; Pritchard, Anthony J. (2005). Mathematical Systems Theory I, Modelling, State Space Analysis, Stability and Robustness. Springer. ISBN 978-3-540-44125-0.

- Sontag, Eduardo D. (1999). Mathematical Control Theory: Deterministic Finite Dimensional Systems (PDF) (2nd ed.). Springer. ISBN 0-387-98489-5. Retrieved June 28, 2012.

- Friedland, Bernard (2005). Control System Design: An Introduction to State-Space Methods. Dover. ISBN 0-486-44278-0.

- Zadeh, Lotfi A.; Desoer, Charles A. (1979). Linear System Theory. Krieger Pub Co. ISBN 978-0-88275-809-1.

- On the applications of state-space models in econometrics

- Durbin, J.; Koopman, S. (2001). Time series analysis by state space methods. Oxford, UK: Oxford University Press. ISBN 978-0-19-852354-3.
